LLM-based extraction pulls structured specs from manufacturer PDFs in seconds per page. Accuracy is the easy part. Traceability is where everyone trips up. If you can't trace a value back to its source page and table cell, you'll spend months auditing later. Bake source annotation into the pipeline from day one.

The traceability problem

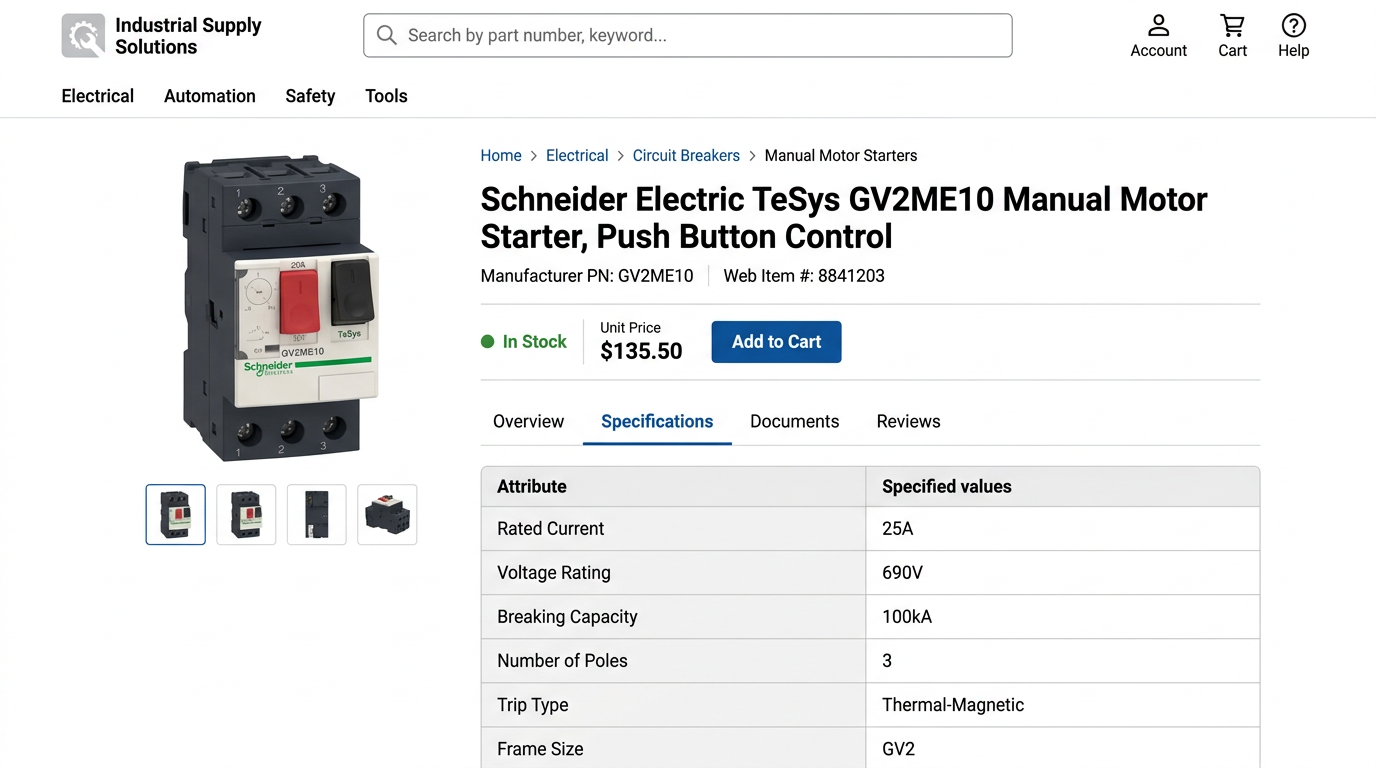

A catalog manager opens a customer complaint. Rated current is wrong on a circuit breaker. She checks the PIM: 25A. Opens three Schneider PDFs. The part number shows up on page 47, page 93, and page 201. Which page did that 25A come from? Did someone misread 20A? No annotation. The value is an orphan.

Every electrical distributor does this. 400-page Schneider catalogs, Eaton datasheets, Legrand spec pages. Teams optimize for speed (get 500 SKUs into the PIM by Friday) and six months later spend 40 hours auditing because nobody tracked where the values came from. Every extracted field needs source_file, source_page, and source_location attached at extraction time. Not later.

The 5-Step Extraction Pipeline

Feed PDFs into an extraction pipeline that identifies page boundaries, table structures, and product families. The model segments each page into regions: spec tables, header blocks, footnotes, diagrams.

For each spec table, the LLM returns typed fields: attribute name, value, unit, and source location (page number, table index, row, column). Define your target schema upfront so the model returns exactly the fields your PIM expects.

Tables in manufacturer catalogs routinely merge cells across rows or use spanning column headers (AC/DC sub-columns, shared breaking capacity values). The extraction model reads the visual structure, not just the text, and propagates merged values to all affected rows with annotations.

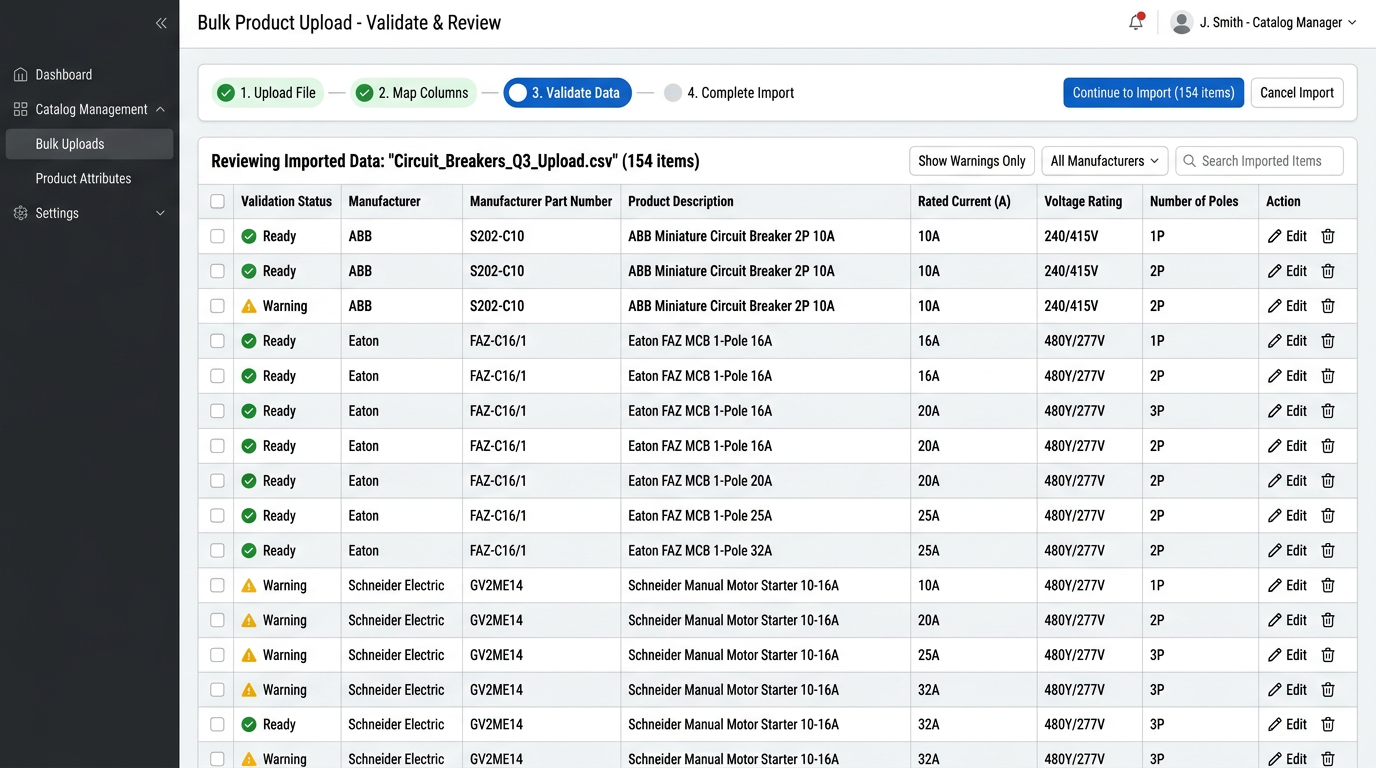

Run automated range checks and pattern matching on every extracted value. Flag OCR artifacts (164 instead of 16A), missing units, and values outside expected ranges. High-confidence extractions go straight to the PIM. Low-confidence values route to a review queue with the source location attached.

Every field that enters your PIM carries source_file, source_page, source_location, and extraction_confidence (high/medium/low). When you need to verify rated_current = 25A six months later, the answer is instant: schneider_tesys_catalog_2024.pdf, page 47, table row 3 column 5.

Merged cells break naive extraction

A Schneider TeSys catalog table lists 12 MCB variants. Part number, rated current, voltage, breaking capacity, poles. Three cells in the breaking capacity column are merged. Products 4, 5, and 6 all share 6 kA, printed once. Text-only extraction sees those merged cells as blank.

Text-only extraction:

- Product 4: breaking_capacity = 6 kA

- Product 5: breaking_capacity = blank

- Product 6: breaking_capacity = blank

Structured extraction with layout awareness:

- Product 4: breaking_capacity = 6 kA (source: page 47, rows 4-6 merged, column 4)

- Product 5: breaking_capacity = 6 kA (source: page 47, rows 4-6 merged, column 4)

- Product 6: breaking_capacity = 6 kA (source: page 47, rows 4-6 merged, column 4)

The extraction model reads the visual layout and fills the value into every row that shares the merged cell. It also records that it was a merge, so a reviewer can verify the assumption later.

OCR errors hide in unit symbols

Scanned Eaton datasheet, molded case circuit breakers. Rated current should be 16A. OCR reads it as 164 because the A touches the 6 in the scan. This happens constantly. Symbols next to digits confuse every OCR engine.

Raw extraction output: rated_current: 164 (confidence: low, no unit detected)

Validation rule triggered: Value > 100A AND no unit detected, flag as OCR_UNIT_ERROR

Corrected with traceability: Field: rated_current = 16A (corrected from 164) Source: eaton_series_g_datasheet.pdf, page 3, table row 8, column 3 Flag: OCR_UNIT_ERROR, auto-corrected, human-verified

The validation catches it: rated_current > 100 and no unit? Flag it. Most miniature and molded case breakers are under 100A, so anything higher without a unit is suspect. Obvious corrections happen automatically. Ambiguous ones go to a person.

Validation Rules That Catch Extraction Errors

| Attribute | Validation rule | Caught error |

|---|---|---|

| rated_current | Value < 1000A AND unit present | 164 flagged, no unit |

| voltage | Value in [110, 120, 208, 220, 230, 240, 277, 480] +/- 10% | 23V flagged, likely 230V |

| breaking_capacity | Value > rated_current AND unit = kA | 6A breaking vs 25A rated fails |

These rules run automatically on every extraction batch. Values that fail get flagged with the source location, so the reviewer opens the PDF directly to the right page and table cell. No searching. Where the extracted value should land in a standard field, chain in field-specific checks too, for example the unit code validator for measurement codes and the IP rating validator for enclosure protection values.

High confidence, validation passes: auto-load to PIM with source metadata. Low confidence OR validation fails: route to review queue with source page attached. Multiple conflicting values across documents: flag for manual resolution with all sources linked.

Start with one product family

Run a 20-page Schneider or Eaton catalog section through the pipeline. Check every value against the source. Can you trace it back to a page and table cell? Count how many auto-load versus route to review. Once you're happy with the hit rate, scale from there.